Machine learning has transitioned from a niche technical pursuit to one with mass appeal. Thanks to the accessibility of modern tools, it's now remarkably easy to get started: upload a dataset, import a library, and train a model with just a few lines of code. Yet this ease of use masks the underlying complexities of doing machine learning well.

The uncomfortable truth is that a large share of machine learning projects fail. According to recent industry data, 42% of AI projects never make it past the pilot phase, and Gartner predicts that 30% of generative AI projects will be abandoned after proof-of-concept by the end of 2025. These failures aren't usually due to limitations of the technology itself; they stem from common, avoidable mistakes that plague both beginners and experienced practitioners.

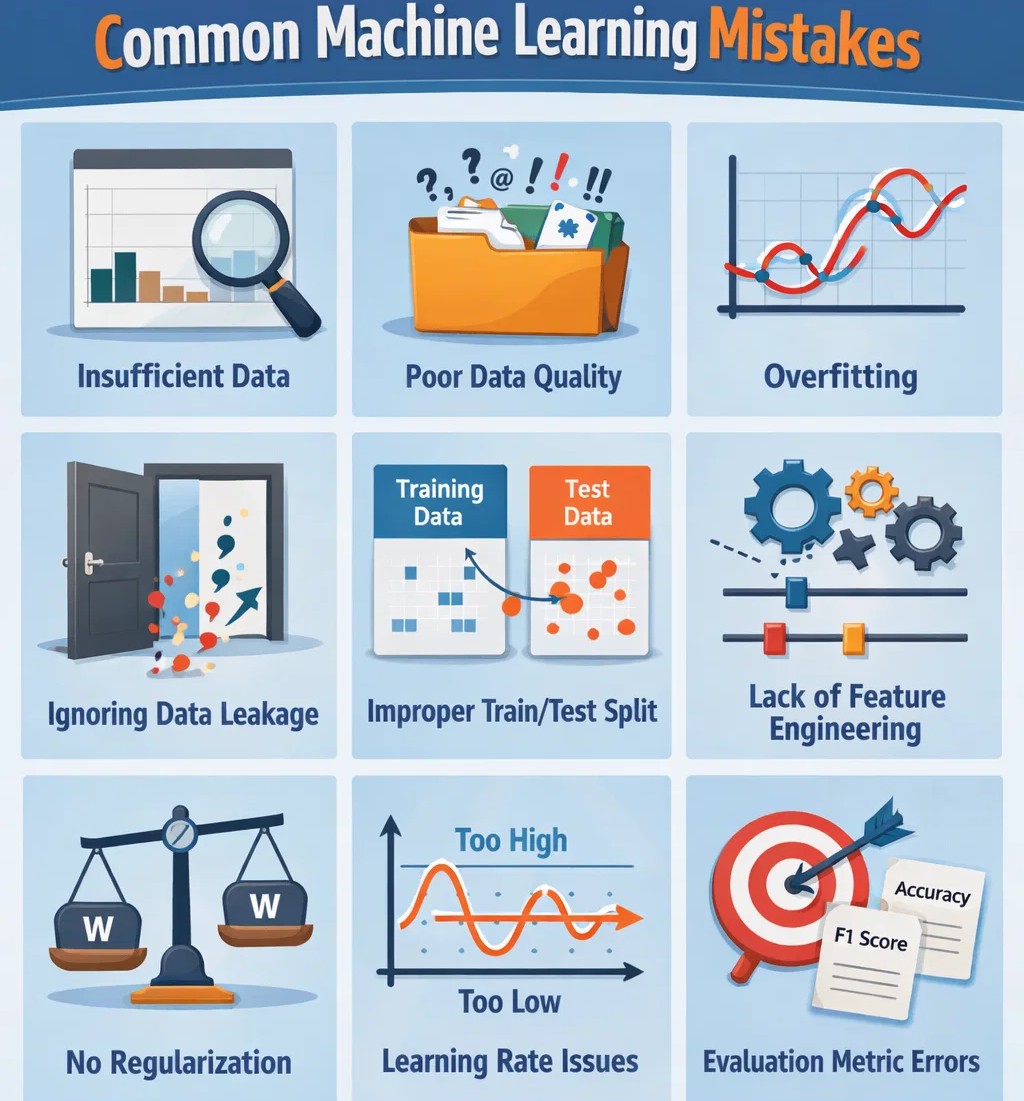

This comprehensive guide will walk you through the most frequent beginner ML errors, teach you how to debug ML projects effectively, and provide practical solutions to keep your models on track. Whether you're building your first classifier or deploying models in production, understanding these pitfalls will save you countless hours of frustration and dramatically improve your outcomes.

Let's dive into the five stages where mistakes most commonly occur: before building models, during training, during evaluation, when comparing models, and in production deployment.

The High Cost of Machine Learning Mistakes

Before we explore specific errors, it's worth understanding why these mistakes matter so much. Poor machine learning practice doesn't just mean wasted time—it leads to:

- Failed projects: Models that work in notebooks but fail in the real world
- Eroded trust: Stakeholders lose confidence when models underperform
- Reproducibility crises: Other researchers can't replicate your findings
- Financial losses: Wasted compute resources and missed opportunities
- Reputational damage: Products that make embarrassing or harmful predictions

As one researcher notes, "Mistakes in machine learning practice are commonplace and can result in loss of confidence in the findings and products of machine learning." The good news is that most of these mistakes are entirely avoidable with proper awareness and process.

Stage 1: Before You Build Models—Data and Strategy Mistakes

Mistake 1: Starting with Technology, Not a Problem

The most fundamental error in machine learning projects is beginning with "let's use ML on this data" rather than identifying a clear business problem. Many initiatives fail because they start as a technology looking for a problem.

The Fix: Before writing a single line of code, ask yourself:

- What specific question am I trying to answer?
- Will the answer to this question drive a decision or action?
- Is machine learning actually the right tool for this job?
- How will I measure success? (Define your success metrics before you start)

As Beam AI's analysis notes, "Without a proper readiness assessment, companies often choose tasks that are either too complex for current tech or offer no real value."

Mistake 2: Failing to Understand Your Data

It's tempting to rush straight into model building, but taking time to understand your data is essential. Researchers put it plainly: "do take the time to understand your data" before any modeling begins.

Common data understanding failures include:

- Assuming a dataset is high-quality because it's widely used
- Not knowing the origin or collection methodology of your data
- Failing to identify missing values, duplicates, or inconsistencies
- Not understanding the limitations or biases in your data

The Fix: Perform thorough exploratory data analysis (EDA). Visualize distributions, check for missing values, understand correlations, and document everything. Remember: "If you train your model using bad data, then you will most likely generate a bad model: a process known as 'garbage in garbage out.'"
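The EDA checks named above can be sketched in a few lines of pandas. This is a minimal illustration, not a complete EDA workflow; the column names and values are invented stand-ins for a real training set.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data standing in for a real training set.
df = pd.DataFrame({
    "age": [34, 51, np.nan, 29, 51, 42],
    "income": [48000, 91000, 62000, np.nan, 91000, 55000],
    "segment": ["a", "b", "b", None, "b", "a"],
})

# Fraction of missing values per column.
missing_share = df.isnull().mean()

# Number of rows that exactly repeat an earlier row.
duplicate_rows = int(df.duplicated().sum())

# Summary statistics and pairwise correlations for the numeric columns.
numeric_summary = df.describe()
correlations = df.select_dtypes("number").corr()

print(missing_share, duplicate_rows, numeric_summary, correlations, sep="\n\n")
```

In practice you would run these checks on the training split only and record the findings alongside the dataset.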

Mistake 3: Using Low-Quality Data

Low-quality data severely limits AI model training, especially in deep learning. Common data quality issues include:

Missing or incomplete data: If substantial data is missing, it becomes difficult to train accurate, reliable models.

Noisy data: Data containing significant noise (outliers, errors, irrelevant information) introduces bias, reduces accuracy, and negatively impacts model performance.

Unrepresentative data: If training data doesn't represent the problem or task the model is meant to solve, it leads to poor generalization.

The Fix: Implement proper data governance, integration, and exploration. "Through data governance, data integration and data exploration, carefully evaluate and define the data scope to ensure data quality."

Mistake 4: Ignoring Outliers

Failing to identify and handle outliers is among the most common deep learning errors. Outliers, especially extreme cases, can significantly impact neural networks, reducing accuracy, introducing bias, and increasing variance.

Sometimes outliers are just noise (requiring cleaning). Other times, they signal more serious issues. Either way, ignoring them leads to poor predictions.

The Fix: Handle outliers systematically using:

- Statistical methods (z-score, hypothesis testing)
- Transformation techniques (Box-Cox transform, median filtering)
- Robust estimators (median instead of mean, trimmed means)
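To make the difference between the statistical and robust options concrete, here is a small sketch comparing a plain z-score rule with a median-based rule (the modified z-score built on the median absolute deviation). The thresholds of 3.0 and 3.5 are common conventions rather than values from this guide, and the readings are invented.

```python
import numpy as np


def zscore_outliers(values, threshold=3.0):
    """Flag points more than `threshold` standard deviations from the mean."""
    values = np.asarray(values, dtype=float)
    z = (values - values.mean()) / values.std()
    return np.abs(z) > threshold


def robust_outliers(values, threshold=3.5):
    """Flag points using the median and MAD, which extreme values barely move."""
    values = np.asarray(values, dtype=float)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    modified_z = 0.6745 * (values - median) / mad
    return np.abs(modified_z) > threshold


# Hypothetical sensor readings with one obvious extreme value.
readings = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 55.0]

print(zscore_outliers(readings))   # no point is flagged
print(robust_outliers(readings))   # only the last point is flagged
```

On a sample this small the extreme value inflates the standard deviation enough that the z-score rule misses it, while the median-based rule still catches it. That masking effect is one reason to prefer robust estimators when outliers are suspected.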

Mistake 5: Looking at All Your Data Before Splitting

When you examine data, you naturally spot patterns that guide your modeling. This is valuable, but dangerous if you look at test data during exploration. As researchers warn: "You should avoid looking closely at any test data in the initial exploratory analysis stage. Otherwise, you might, consciously or unconsciously, make assumptions that limit the generality of your model in an untestable way."

The Fix: Split your data into training, validation, and test sets before any exploratory analysis. Only explore the training data. Keep test data completely untouched until final evaluation.
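A three-way split can be sketched with two calls to scikit-learn's `train_test_split`. The 60/20/20 proportions and the synthetic data are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic stand-in: 1,000 rows, 5 features, binary labels.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
y = rng.integers(0, 2, size=1000)

# First carve off the test set (20%), then split the rest into train and validation.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)
X_train, X_val, y_train, y_val = train_test_split(
    X_dev, y_dev, test_size=0.25, stratify=y_dev, random_state=42
)

print(len(X_train), len(X_val), len(X_test))  # 600 200 200
```

From this point on, exploration and modeling decisions would use `X_train` (and `X_val` for tuning), while `X_test` stays untouched until the final evaluation.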

Mistake 6: Using Inadequate Hardware

Deep learning models process enormous amounts of data, and many older systems simply can't keep up. Underpowered hardware, whether that means limited compute, insufficient memory, or inadequate parallelization, slows training and limits the models you can realistically build.

Scaling out across hundreds of CPUs is rarely the practical route for deep learning anymore. Modern GPUs run in parallel the millions of calculations that robust model training requires.

The Fix: Ensure adequate hardware for your task. Large AI models need substantial memory, especially when processing large datasets. "Don't be stingy with memory, because out-of-memory errors can have serious consequences after training starts, forcing you to start over."

Stage 2: Building Models—Architecture and Training Mistakes

Mistake 7: Dataset Size Problems—Too Big or Too Small

Dataset size significantly impacts deep learning model training. Generally, larger datasets enable better performance because models can learn deeper patterns and generalize better. However, size alone isn't enough: data must also be high-quality and diverse.

Too small: Models lack sufficient examples for learning, which leads to overfitting (performing well on training data but poorly on new data).

Too large: More data rarely hurts accuracy by itself, but excessively large datasets raise compute, storage, and iteration costs, and volume gained by adding redundant or noisy samples does not improve the model. Underfitting, where a model performs poorly on both training and test data, comes from a model that is too simple for the patterns in the data, not from having too many examples.

The Fix: Find the optimal balance: enough samples for learning without excessive computational burden. Ensure diversity and quality regardless of size.

Mistake 8: Inadequate Data Preprocessing

Proper data preprocessing isn't optional; it's essential for building reliable models. As the Machine Learning Mastery guide emphasizes, "Proper data preprocessing is not something to be overlooked for building reliable machine learning models."

Key preprocessing failures:

- Not handling missing values properly
- Failing to remove duplicates
- Ignoring inconsistent records
- Not scaling or normalizing features appropriately

The Fix: Clean your data systematically. For numeric columns, median imputation is a reasonable default for missing values; for categorical columns, use mode imputation. Scale features so all contribute proportionally to model learning.

Mistake 9: Data Leakage During Preprocessing

When you preprocess data, it's crucial to avoid leaking information from the test set into training. Common examples include:

- Scaling data before splitting (test set statistics influence training)
- Using the entire dataset for imputation before splitting
- Doing data augmentation before splitting (creating duplicates across train/test)

The Fix: Always fit preprocessing parameters (scaling means, imputation values) on training data only, then transform validation and test data using those fitted parameters. Split first, preprocess second.
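One way to enforce "split first, preprocess second" is to put the preprocessing steps inside a scikit-learn `Pipeline`, so that imputation and scaling statistics are learned only when `fit` is called on the training split. The sketch below uses synthetic data and a logistic regression model purely as placeholders.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data with some injected missing values.
rng = np.random.default_rng(0)
X = rng.normal(loc=5.0, scale=2.0, size=(500, 4))
y = (X[:, 0] > 5.0).astype(int)
X[rng.random(X.shape) < 0.05] = np.nan

# Split before any statistics are computed.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),  # medians come from X_train only
    ("scale", StandardScaler()),                   # mean and std come from X_train only
    ("model", LogisticRegression()),
])
pipeline.fit(X_train, y_train)

# The test split is only transformed with the already-fitted parameters.
test_accuracy = pipeline.score(X_test, y_test)
print(f"Test accuracy: {test_accuracy:.3f}")
```

The same pipeline object can be passed to cross-validation utilities, which refit the preprocessing inside each fold and so avoid leakage there as well.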

Mistake 10: Allowing Test Data to Leak into Training

Test data leakage, where information from the test set influences model training, is "a common reason why ML models fail to generalize." This can happen in subtle ways:

- Using test data for hyperparameter tuning
- Looking at test data during exploratory analysis
- Making modeling decisions based on test set performance

The Fix: Treat test data as completely inaccessible until final evaluation. Use separate validation sets for model development and tuning. Only touch test data once, at the very end.

Mistake 11: Overfitting Without Proper Validation

Overfitting occurs when a model performs well on training data but poorly on new, unseen data. This is a universal struggle for beginners.

The Fix: Use k-fold cross-validation. This technique divides your data into k subsets and trains the model k times, each time holding out a different subset for validation and training on the remaining k-1. Cross-validation provides a robust estimate of model performance and helps detect overfitting.

Mistake 12: Trying to Make Your First Model Your Best Model

When starting with deep learning, it's tempting to try building a single model that handles all required tasks. This approach typically fails because different models have different strengths.

For example, decision trees handle a mix of categorical and numerical features and can capture non-linear relationships, but a single tree overfits easily and cannot extrapolate beyond the target values it saw during training. Logistic regression is a fast, interpretable baseline for classification, but it assumes a roughly linear decision boundary and is not designed to predict continuous targets.

The Fix: Embrace iteration and variety. "Iteration and adaptation are the best tools for obtaining reliable results. While it may be tempting to build once and reuse, this stagnates results and may cause users to overlook many other possible outputs."

Mistake 13: Reusing the Same Model Repeatedly

Training one deep learning model and repeatedly reusing it might seem efficient, but it's counterproductive. By training multiple iterations and variants of models, you collect enough results to separate real differences from run-to-run noise.

If you only train one model and reuse it, you end up with standardized results that repeat every time. This may cause research to miss opportunities from diverse datasets that could yield more valuable insights.

The Fix: Train multiple models on diverse datasets. See how different models interpret data differently. For deep learning, this diversity teaches algorithms to produce varied outputs rather than identical or similar predictions.

Mistake 14: Poor Hyperparameter Tuning

Hyperparameters dramatically impact model performance. Beginners often either ignore tuning entirely or approach it haphazardly.

The Fix: Use systematic hyperparameter optimization:

- Grid Search: Exhaustively search through specified parameter grids
- Random Search: Sample parameter settings randomly within defined ranges

Multi-metric evaluation (using both accuracy and F1 score, especially for imbalanced datasets) helps ensure robust model selection.
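Both search strategies, along with multi-metric evaluation, are available in scikit-learn. The following is a minimal sketch on synthetic imbalanced data; the parameter ranges, the random forest model, and the choice to refit on F1 are illustrative assumptions, not recommendations.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

# Synthetic imbalanced data (roughly 80% / 20%) as a stand-in.
X, y = make_classification(
    n_samples=300, n_features=10, weights=[0.8, 0.2], random_state=0
)

# Grid search: every combination in the grid is evaluated.
grid = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid={"n_estimators": [25, 50], "max_depth": [3, None]},
    scoring={"accuracy": "accuracy", "f1": "f1"},  # track both metrics
    refit="f1",                                    # pick the final model by F1
    cv=3,
)
grid.fit(X, y)
print("Grid search best params:", grid.best_params_)

# Random search: a fixed number of settings sampled from the given ranges.
random_search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions={"n_estimators": randint(10, 60), "max_depth": [3, 5, None]},
    n_iter=4,
    scoring="f1",
    cv=3,
    random_state=0,
)
random_search.fit(X, y)
print("Random search best params:", random_search.best_params_)
```

With multiple metrics, `grid.cv_results_` holds per-metric columns such as `mean_test_accuracy` and `mean_test_f1`, so you can see whether the two metrics agree on the best setting.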

Stage 3: Evaluating Models—Measurement Mistakes

Mistake 15: Choosing the Wrong Metrics

Selecting appropriate evaluation metrics is essential for accurate model assessment. With imbalanced classes, accuracy can be misleading.

The Fix: Align metrics with project goals:

- For imbalanced classification: precision, recall, F1 score
- For regression: mean squared error, R-squared
- For ranking problems: AUC-ROC, mean average precision
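A tiny worked example shows why accuracy misleads on imbalanced classes. The labels below are invented: 95 negatives, 5 positives, and a "model" that always predicts the majority class.

```python
import numpy as np
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score

# Hypothetical labels: 95 negatives and 5 positives.
y_true = np.array([0] * 95 + [1] * 5)

# A degenerate model that always predicts the negative class.
y_pred = np.zeros(100, dtype=int)

accuracy = accuracy_score(y_true, y_pred)
precision = precision_score(y_true, y_pred, zero_division=0)
recall = recall_score(y_true, y_pred, zero_division=0)
f1 = f1_score(y_true, y_pred, zero_division=0)

print(accuracy, precision, recall, f1)  # 0.95 0.0 0.0 0.0
```

The model never finds a single positive case, yet accuracy reports 95%. Precision, recall, and F1 expose the failure immediately.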

Mistake 16: Not Saving Data for Final Evaluation

When using cross-validation during model development, you might exhaust all data, leaving nothing for final evaluation of your chosen model instance. This is a subtle but serious mistake.

The Fix: "Do save some data to evaluate your final model instance." Set aside a completely untouched test set before any modeling begins. Only use it once, at the very end, to estimate real-world performance.

Mistake 17: Failing to Correct for Multiple Comparisons

During model development, you'll likely try many approaches: different algorithms, hyperparameters, and preprocessing combinations. Each comparison increases the chance of finding something that looks good by random chance.

The Fix: "Do correct for multiple comparisons." If you make many comparisons, your validation set becomes less useful for guiding decisions. Consider setting aside multiple validation sets or using statistical corrections for multiple hypothesis testing.
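As one example of a statistical correction, the Bonferroni method divides the significance level by the number of comparisons. The sketch below uses invented p-values; Bonferroni is just one (conservative) option among several corrections.

```python
def bonferroni_significant(p_values, alpha=0.05):
    """Return which comparisons stay significant after Bonferroni correction."""
    threshold = alpha / len(p_values)
    return [p < threshold for p in p_values]


# Hypothetical p-values from comparing five candidate models against a baseline.
p_values = [0.004, 0.03, 0.04, 0.20, 0.60]

uncorrected = [p < 0.05 for p in p_values]
corrected = bonferroni_significant(p_values)

# Chance of at least one false positive across five independent tests at alpha = 0.05.
family_wise_error = 1 - (1 - 0.05) ** len(p_values)

print(uncorrected)                  # [True, True, True, False, False]
print(corrected)                    # [True, False, False, False, False]
print(round(family_wise_error, 3))  # 0.226
```

Three candidates look significant before correction, but only one survives it. Without correction, five tests at the 5% level carry roughly a 23% chance of at least one spurious "win."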

Mistake 18: Using Inappropriate Test Sets

Even a carefully held-out test set may not adequately measure model generality if it shares the same biases as your training data.

The Fix: "Do use an appropriate test set" and "do report performance in multiple ways." In practice, you may need multiple test datasets from different sources to robustly evaluate your model's ability to generalize.

Mistake 19: Not Checking Model Reproducibility

A model that can't be reproduced isn't trustworthy. Yet many practitioners fail to set random seeds, document preprocessing steps, or version their code and data.

The Fix: Make reproducibility a priority:

- Set random seeds for all stochastic processes
- Document every preprocessing decision
- Use version control for code AND data
- Record hyperparameters for every experiment
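Two of these habits, seeding and recording hyperparameters, can be sketched with the standard library and NumPy. The helper names `set_seeds` and `log_experiment`, the config fields, and the output path are all hypothetical; dedicated experiment trackers do this more thoroughly.

```python
import json
import os
import random
import tempfile

import numpy as np


def set_seeds(seed):
    """Seed the Python and NumPy global random generators."""
    random.seed(seed)
    np.random.seed(seed)


def log_experiment(path, config, metrics):
    """Write one experiment's settings and results to a JSON file."""
    with open(path, "w") as f:
        json.dump({"config": config, "metrics": metrics}, f, indent=2, sort_keys=True)


# Hypothetical experiment configuration.
config = {"seed": 42, "model": "random_forest", "n_estimators": 100, "imputation": "median"}

# Re-seeding reproduces the same random draws.
set_seeds(config["seed"])
first_draw = np.random.rand(3)
set_seeds(config["seed"])
second_draw = np.random.rand(3)
print(np.array_equal(first_draw, second_draw))  # True

# Store the configuration next to the results (the metric here is a placeholder).
log_path = os.path.join(tempfile.gettempdir(), "experiment_example.json")
log_experiment(log_path, config, {"accuracy": None})
```

Deep learning frameworks keep their own random generators (for example, `torch.manual_seed` in PyTorch), so those would need seeding as well.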

Stage 4: Comparing Models—Fair Comparison Mistakes

Mistake 20: Unfair Model Comparisons

When comparing models, it's easy to introduce bias inadvertently. Common unfair practices include:

- Comparing your tuned model against baselines with default parameters
- Using different train/test splits for different models
- Reporting only the best run without averaging across multiple runs

The Fix: Standardize your comparison protocol. Use identical train/test splits. Tune all models (not just yours). Report means and standard deviations across multiple runs.
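A comparison protocol along these lines might look like the sketch below: one shared, seeded splitter so every model sees identical folds, with the mean and standard deviation reported across repeats. The two models and the synthetic data are placeholders, and tuning is omitted for brevity.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score

# Synthetic stand-in data.
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# One seeded splitter shared by every model, so all are scored on the same folds.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=0)

models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=50, random_state=0),
}

results = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    results[name] = (scores.mean(), scores.std())
    print(f"{name}: {scores.mean():.3f} +/- {scores.std():.3f} over {len(scores)} folds")
```

If the gap between two means is smaller than the spread across folds, the comparison does not support declaring a winner.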

Mistake 21: Believing Community Benchmarks Blindly

Widely used benchmark datasets often have hidden limitations. "Some widely used datasets are known to have significant limitations—sometimes data are used just because they are easy to get hold of."

The Fix: Build relationships with the domain experts who generate data. This increases the likelihood of obtaining high-quality datasets that meet your needs and helps you avoid overfitting to community benchmarks.

Mistake 22: Not Accounting for Data Drift

Models begin degrading the moment they encounter real-world data. Many teams treat model deployment as a one-time event, failing to plan for ongoing monitoring.

The Fix: Establish robust MLOps pipelines with:

- Model monitoring and observability (identify performance drops before they affect users)
- Data drift detection (recognize when real-world data no longer matches training data)
- Model retraining strategy (establish a lifecycle that keeps AI relevant)
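Data drift detection can start very simply. The sketch below compares each feature's live distribution against a reference sample with a two-sample Kolmogorov-Smirnov test from SciPy. The function name `drifted_features`, the significance level, and the simulated shift are assumptions for illustration; production systems usually add windowing and alerting on top.

```python
import numpy as np
from scipy.stats import ks_2samp


def drifted_features(reference, live, alpha=0.01):
    """Return indices of columns whose live distribution differs from the reference."""
    flagged = []
    for col in range(reference.shape[1]):
        result = ks_2samp(reference[:, col], live[:, col])
        if result.pvalue < alpha:
            flagged.append(col)
    return flagged


rng = np.random.default_rng(0)
reference = rng.normal(0.0, 1.0, size=(2000, 3))  # stands in for training data
live = rng.normal(0.0, 1.0, size=(2000, 3))       # stands in for production data
live[:, 2] += 1.0                                 # simulate a shift in the third feature

print(drifted_features(reference, live))
```

The shifted third feature (index 2) is flagged. Because each feature is tested separately, occasional false alarms on unshifted features are expected at any fixed `alpha`.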

Stage 5: Production and Security—Deployment Mistakes

Mistake 23: Security Gaps in ML Pipelines

In 2026, ML pipeline security remains alarmingly immature. As Chainguard's analysis notes, "While infrastructure for training and deploying models in production is improving, creating an end-to-end ML pipeline in 2026 is fraught with footguns for the unwary ML Ops engineer."

Critical security gaps include:

Pickle deserialization risks: Pickle files remain the default serialization format in PyTorch. When deserialized, they can execute arbitrary Python code, which is a massive security vulnerability. Safe formats like safetensors exist but aren't yet default.

Model signing and provenance gaps: AutoML frameworks don't encourage signing within pipelines. Models on popular repositories like Hugging Face rarely come with cryptographically verifiable metadata. "ML Ops practitioners concerned about security in 2026 should closely follow SLSA guidelines on signing and verifying signatures."

Supply chain vulnerabilities: ML pipelines depend heavily on ecosystem packages (PyPI, npm, Maven Central). This creates significant supply chain security exposure. "This heavy dependence on ecosystem packages means the average ML pipeline is unusually exposed to software supply chain security risk."

Container security issues: AI/ML container images tend to be large, complex, and built on general-purpose base images with thousands of packages, many of them unnecessary for training or inference. This creates a broad attack surface.

The Fix: Implement security best practices:

- Use safetensors instead of pickle where possible
- Sign model artifacts and verify signatures before use
- Pin dependencies and use cooling-off periods before allowing new packages into production
- Remove unnecessary packages and minimize attack surface
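As a simplified stand-in for artifact verification, the sketch below pins a SHA-256 digest for a model file and refuses to trust the file if the digest changes. This is not cryptographic signing: a digest check only detects modification relative to a value you already trust, whereas signing also establishes who produced the artifact. The file name and helper functions are hypothetical.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_digest):
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_of_file(path), expected_digest)


with tempfile.TemporaryDirectory() as tmp:
    artifact = os.path.join(tmp, "model.bin")  # hypothetical model artifact
    with open(artifact, "wb") as f:
        f.write(b"model weights")

    pinned = sha256_of_file(artifact)          # recorded at release time
    ok_before = verify_artifact(artifact, pinned)

    with open(artifact, "ab") as f:            # simulate tampering
        f.write(b"tampered")
    ok_after = verify_artifact(artifact, pinned)

print(ok_before, ok_after)  # True False
```

A loader would call `verify_artifact` before deserializing anything, which matters most for formats like pickle that can execute code on load.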

Mistake 24: Ignoring LLM-Specific Risks

As we move toward agentic AI and LLM-powered applications, new risks emerge. Unlike static bots, agentic automation takes actions, introducing new layers of risk.

Critical concerns:

- Hallucination mitigation
- Prompt injection protection
- Responsible AI governance

The Fix: "Successfully implementing agentic process automation requires a sophisticated approach to workflow orchestration to ensure these agents remain within the bounds of responsible AI governance."

Mistake 25: Treating AI Productionization as One-Time Event

Many organizations treat model deployment as a finish line. In reality, it's just the beginning. Models degrade, data drifts, and requirements change.

The Fix: Treat AI as a living system requiring ongoing attention. Establish centers of excellence that standardize how AI integrations are handled across the company. Invest in monitoring, observability, and retraining infrastructure.

Mistake 26: Integration Errors with Legacy Systems

When organizations upgrade to deep learning, they often want to keep using the machines they already have. However, integrating cutting-edge AI into older technologies and systems, both physical and data systems, presents significant challenges.

The Fix: Maintain accurate documentation and consider redesigning hardware and datasets as needed. Work with implementation partners to simplify deployment of services like anomaly detection and predictive analytics.

Mistake 27: Failing to Define Success Metrics

"If you don't define your AI success metrics early on, you can't prove the value realization. When the budget cycle comes around, these 'zombie projects' are the first to be cut because no one can quantify their impact."

The Fix: Define clear, measurable success criteria before starting. Tie these metrics to business outcomes, not just technical performance. Track them consistently throughout the project lifecycle.

Debugging ML Projects: Tools and Techniques

Systematic Debugging Approaches

When something goes wrong with an ML project, systematic debugging is essential. A pattern-oriented approach helps diagnose and debug abnormal software structure and behavior.

Key debugging stages:

- Diagnostics before debugging: identify problems in memory dumps, traces, and logs
- Analysis patterns: recognize common failure modes
- Implementation patterns: apply proven fixes

Common issues to debug include environmental problems, crashes, hangs, resource spikes, leaks, and performance degradation.

Debugging During Training

Modern ML tools enable single-step debugging during model training. Using Visual Studio Code, you can set breakpoints in your model specification script and step through execution to identify issues.

Setup steps:

- Install MLTK and the VS Code Python extension
- Configure the Python interpreter to point to your ML environment
- Set breakpoints in your model script
- Create a launch configuration for debugging
- Step through code to inspect variables and execution flow

For debugging data generators, set the debug=True option to enable detailed inspection.

Monitoring and Observability

Once models are in production, monitoring becomes critical. Key metrics to track:

- Prediction accuracy over time
- Feature distributions (for drift detection)
- Latency and throughput
- Resource utilization

When performance drops, observability tools help trace the cause—whether data drift, infrastructure issues, or model degradation.

A Practical Framework for Avoiding ML Mistakes

Before You Start

- Define the problem clearly: What business question are you answering?
- Set success metrics: How will you measure value?
- Understand your data: Source, quality, limitations, biases
- Split data first: Training, validation, test sets before any exploration

During Development

- Clean systematically: Handle missing values, outliers, duplicates
- Preprocess without leakage: Fit on train only, transform others
- Validate robustly: Cross-validation, multiple validation sets if needed
- Tune systematically: Grid search or random search with cross-validation
- Track experiments: Record every parameter, preprocessing decision, and result

During Evaluation

- Choose appropriate metrics: Match to problem type and business goals
- Use untouched test data: Only once, at the very end
- Report uncertainty: Confidence intervals, not just point estimates
- Test generalization: Multiple test sets if possible
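For the "report uncertainty" item, one common approach is a percentile bootstrap over the test set predictions. The sketch below is one way to do it, with invented labels and predictions; the function name `bootstrap_accuracy_ci` and the defaults (2,000 resamples, 95% confidence) are assumptions.

```python
import numpy as np


def bootstrap_accuracy_ci(y_true, y_pred, n_resamples=2000, confidence=0.95, seed=0):
    """Return (accuracy, lower, upper) using a percentile bootstrap."""
    correct = (np.asarray(y_true) == np.asarray(y_pred)).astype(float)
    rng = np.random.default_rng(seed)

    # Resample test examples with replacement and recompute accuracy each time.
    idx = rng.integers(0, len(correct), size=(n_resamples, len(correct)))
    resampled = correct[idx].mean(axis=1)

    tail = (1.0 - confidence) / 2.0 * 100.0
    lower, upper = np.percentile(resampled, [tail, 100.0 - tail])
    return correct.mean(), lower, upper


# Hypothetical test set: 200 examples, 30 of them misclassified.
y_true = np.array([1] * 100 + [0] * 100)
y_pred = y_true.copy()
y_pred[:30] = 0

point, lower, upper = bootstrap_accuracy_ci(y_true, y_pred)
print(f"Accuracy: {point:.3f} (95% CI: {lower:.3f} to {upper:.3f})")
```

Reporting the interval alongside the point estimate makes it clear how much of an apparent difference between models could be explained by the particular test examples drawn.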

For Production

- Secure your pipeline: Safe serialization, signing, dependency management
- Plan for monitoring: Data drift, performance degradation
- Establish retraining cadence: When and how to update models
- Document everything: For reproducibility and debugging

Common Code Patterns for Prevention

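Here's a practical example of proper preprocessing with safety checks. It splits the data first, then learns imputation values and scaling parameters from the training split only:

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

try:
    df = pd.read_csv('data.csv')

    # Check the missing-value pattern
    missing_percentage = df.isnull().mean() * 100

    # Identify columns with high missing percentages
    high_missing_cols = missing_percentage[missing_percentage > 50].index
    print(f"Columns with >50% missing: {list(high_missing_cols)}")

    # Split first so test rows never influence preprocessing statistics
    train_df, test_df = train_test_split(df, test_size=0.2, random_state=42)
    train_df, test_df = train_df.copy(), test_df.copy()

    # Identify numeric and categorical columns
    numeric_columns = df.select_dtypes(include=[np.number]).columns
    categorical_columns = df.select_dtypes(include=['object']).columns

    # Learn imputation values from the training split only
    medians = train_df[numeric_columns].median()
    modes = train_df[categorical_columns].mode().iloc[0]
    for part in (train_df, test_df):
        part[numeric_columns] = part[numeric_columns].fillna(medians)
        part[categorical_columns] = part[categorical_columns].fillna(modes)

    # Fit the scaler on training data, then apply it to both splits
    scaler = StandardScaler()
    train_df[numeric_columns] = scaler.fit_transform(train_df[numeric_columns])
    test_df[numeric_columns] = scaler.transform(test_df[numeric_columns])
except FileNotFoundError:
    print("Data file not found")
except Exception as e:
    print(f"Error processing data: {e}")
```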

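And here's robust cross-validation with stratified folds:

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic stand-in data; replace with your own preprocessed features and labels
X, y = make_classification(n_samples=500, n_features=10, random_state=42)

model = RandomForestClassifier(n_estimators=100, random_state=42)

# Stratified folds keep the class proportions the same in every fold
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

scores = cross_val_score(model, X, y, cv=skf, scoring='accuracy')
print(f"CV scores: {scores}")
print(f"Mean: {scores.mean():.3f} (±{scores.std() * 2:.3f})")
```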

Conclusion: Building Better Machine Learning Practice

Machine learning is both powerful and accessible in 2026, but that accessibility masks complexity. As one researcher notes, "the ease of use masks the underlying complexities of doing machine learning. This, coupled with a relatively inexperienced community of practitioners, has led to flawed practices, which are reflected in issues such as poor reproducibility within machine-learning-based studies."

The good news is that most common mistakes are avoidable. By understanding the pitfalls outlined in this guide—from data quality issues and overfitting to security vulnerabilities and integration errors—you can dramatically improve your project outcomes.

Key takeaways for each stage of your ML journey:

For beginners: Focus on data quality first. Most model problems trace back to data issues. Master preprocessing before diving into complex architectures.

For practitioners: Develop systematic validation and evaluation practices. Cross-validation isn't optional—it's essential for understanding model performance.

For those deploying models: Security and monitoring are non-negotiable. The industry is still catching up to production ML needs—don't assume tools handle security by default.

For everyone: Embrace iteration. Your first model won't be your best. Train multiple variants, learn from failures, and continuously improve.

As the field matures, we can expect better tools, standardization, and regulation to address these issues. But until then, awareness and rigorous practice remain our best defenses against common machine learning mistakes.

The path to mastery in machine learning isn't about avoiding errors entirely—it's about recognizing them quickly, understanding their causes, and building systems that prevent them from recurring. With the framework and techniques in this guide, you're equipped to do exactly that.

Your next step? Review your current ML projects through the lens of these common mistakes. Check your data preprocessing, validation approach, evaluation metrics, and deployment practices. Identify one area for improvement and address it today. Small fixes compound into significantly better outcomes over time.
